Filtering Using Robots.txt

On reports that include the Source URL, The Crawl Tool provides a special kind of filter that shows which pages a robots.txt file would allow or block, as evaluated by the code from Google's Googlebot.

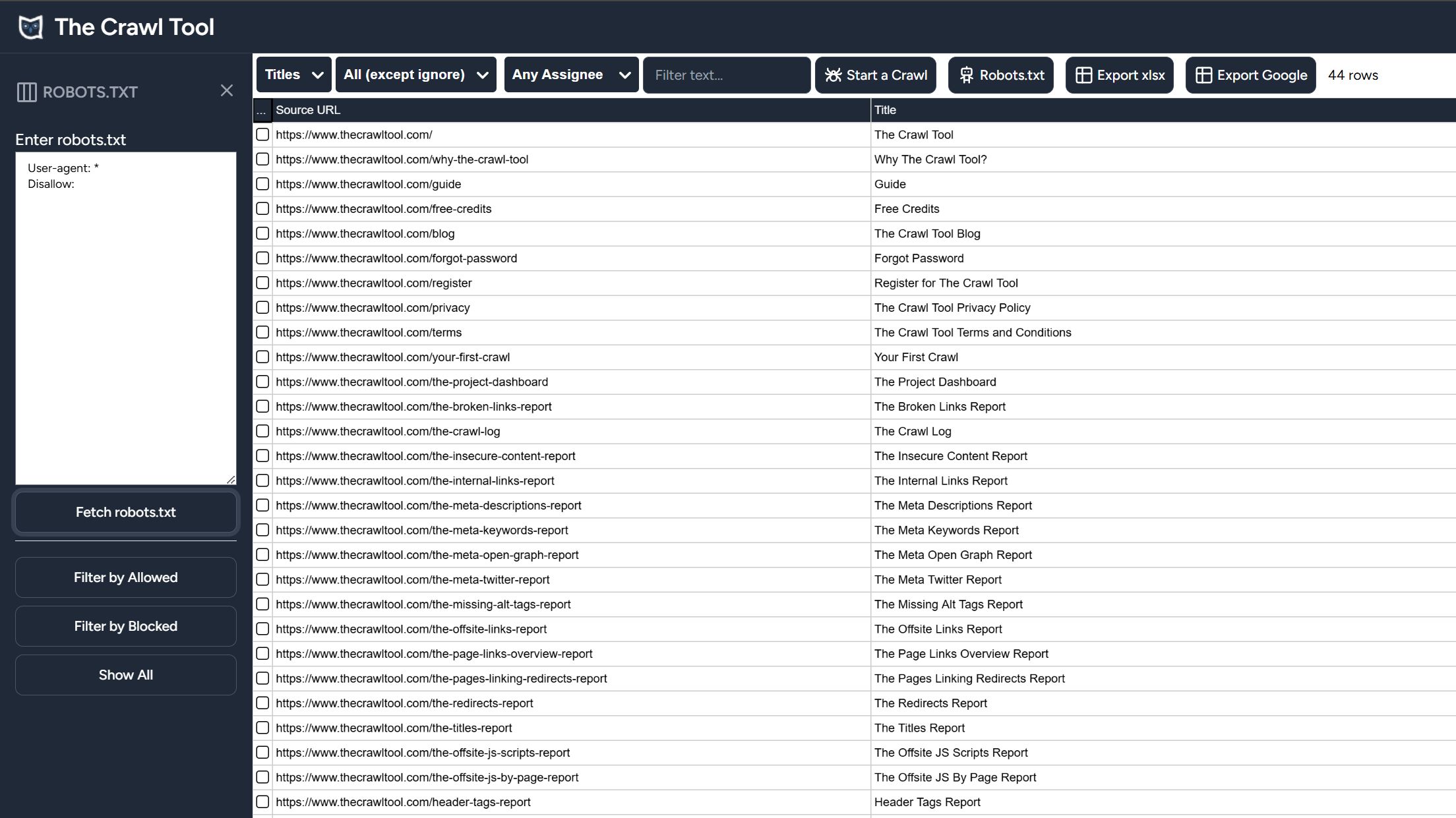

A button titled "Robots.txt" appears at the top. Clicking it opens a side panel on the left-hand side containing the options described below.

Enter robots.txt

The "Enter robots.txt" textbox allows you to write or paste in a robots.txt file to use. This is useful for checking whether a new robots.txt file, or changes to an existing one, will work as expected before you make it live on your site.
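
If you want a rough offline equivalent of this check, the sketch below tests a draft robots.txt against individual URLs. It uses Python's standard `urllib.robotparser`, which is an assumption made for illustration: it is not the Googlebot code that The Crawl Tool uses, and it can give different answers in edge cases such as wildcards or overlapping Allow and Disallow rules. The draft rules, the `is_allowed` helper, and the example.com URLs are all hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft rules, as you might paste them into the textbox.
DRAFT_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text lets user_agent crawl url.

    Uses Python's standard-library parser, not Google's, so treat the
    result as an approximation of what The Crawl Tool reports.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/blog/", "https://example.com/private/page.html"):
        print(url, "allowed" if is_allowed(DRAFT_ROBOTS_TXT, url) else "blocked")
```

Keeping the draft as a plain string mirrors the textbox: you can edit the rules and rerun the check without touching the live site.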

Fetch robots.txt

The "Fetch robots.txt" button grabs the robots.txt from the live site and fills it into the "Enter robots.txt" textbox for you. This is useful for analysing the current situation, and it saves time when you want to test changes.
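
For reference, a robots.txt file always lives at the root of a site, so its address can be derived from any page URL. The following is a minimal sketch of that idea using only Python's standard library. The function names `robots_txt_url` and `fetch_robots_txt` are hypothetical, and this is not a description of how The Crawl Tool performs the fetch internally.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen


def robots_txt_url(page_url: str) -> str:
    """Build the robots.txt address for the site that hosts page_url."""
    parts = urlsplit(page_url)
    # Keep the scheme and host, replace the path, drop query and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def fetch_robots_txt(page_url: str, timeout: float = 10.0) -> str:
    """Download the live robots.txt as text (requires network access)."""
    with urlopen(robots_txt_url(page_url), timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")
```

The returned text can then be edited locally, which corresponds to fetching the live file and adjusting it in the textbox before testing.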

Filter by Allowed

Clicking this button filters the data to show only the lines for pages that robots are allowed to crawl.

Filter by Blocked

Clicking this button filters the data to show only the lines for pages that robots are blocked from crawling. This makes it easy to see what you are excluding.

Show All

Clicking this button turns off any previous "Filter by Allowed" or "Filter by Blocked" filter and shows all data lines, irrespective of the robots.txt.
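
The three filter buttons can be thought of as three views over the same report rows. The sketch below illustrates that idea under stated assumptions: rows are plain dictionaries with a "Source URL" key, the `filter_rows` function and its `mode` values are hypothetical, and the decision comes from Python's `urllib.robotparser` rather than the Googlebot code The Crawl Tool uses, so results may differ for more complex rules.

```python
from urllib.robotparser import RobotFileParser


def filter_rows(rows, robots_txt, mode="all", user_agent="Googlebot"):
    """Return the rows matching mode: "allowed", "blocked", or "all".

    rows is a list of dicts that each contain a "Source URL" key
    (an assumed shape, chosen only for this illustration).
    """
    if mode not in ("all", "allowed", "blocked"):
        raise ValueError(f"unknown mode: {mode!r}")
    if mode == "all":
        # "Show All": ignore the robots.txt entirely.
        return list(rows)

    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    want_allowed = mode == "allowed"
    return [
        row for row in rows
        if parser.can_fetch(user_agent, row["Source URL"]) == want_allowed
    ]


# Hypothetical report data and rules.
ROWS = [
    {"Source URL": "https://example.com/"},
    {"Source URL": "https://example.com/admin/login"},
    {"Source URL": "https://example.com/blog/post-1"},
]
RULES = "User-agent: *\nDisallow: /admin/\n"

if __name__ == "__main__":
    for mode in ("allowed", "blocked", "all"):
        print(mode, [row["Source URL"] for row in filter_rows(ROWS, RULES, mode)])
```

Every row lands in exactly one of the allowed or blocked views, so the two filtered views together always add up to the "Show All" view.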